Email marketing remains one of the most cost‑effective channels for engaging customers and driving revenue.

But sending a newsletter without testing its impact is like launching a new product without quality control.

This is where split testing comes in.

Understanding what split testing is in email marketing helps you stop guessing and start improving fast.

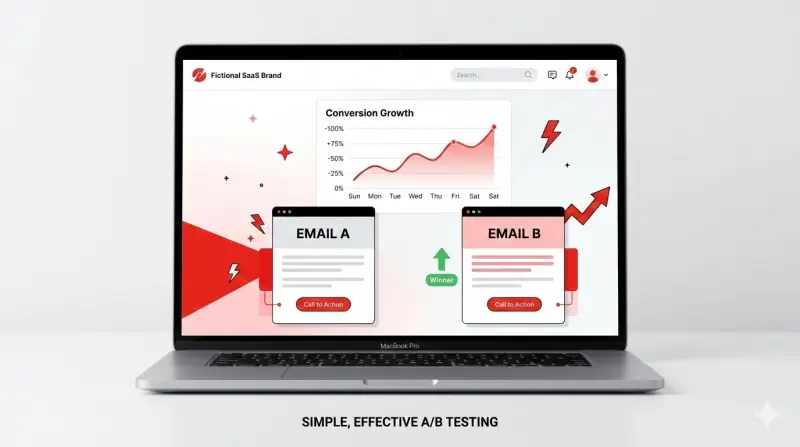

Put simply, split testing, also known as A/B testing, helps marketers move beyond guesswork and use data to optimize everything from subject lines to call‑to‑action (CTA) buttons.

This article explains what split testing is, why it matters, and how systematic experimentation can substantially increase conversions.

What is Split Testing in Email Marketing?

Split testing involves creating two or more versions of an email, webpage, or other digital asset, sending each version to a different segment of your audience, and comparing the results to see which one performs better.

Each version differs only by a single element, such as the headline, image, or send time, so that any performance difference can be attributed to that change.

The goal is to learn what resonates with your audience and apply those insights to future campaigns.

While the terms “split testing” and “A/B testing” are often used interchangeably, they arose from different contexts. A/B testing originally described experiments in which two versions were compared without splitting traffic across separate URLs.

Split testing, on the other hand, traditionally meant splitting traffic between two URLs (landing pages A and B).

What matters is that your audience receives different variants at random, that results are measured accurately, and that the winning version is used going forward.

Why Split Test Emails?

Guessing at what will engage subscribers wastes time and money. Split testing removes the guesswork and lets you use data to determine what actually works.

When you test subject lines, content, or CTAs, you can answer three critical questions:

- Does this change improve open rates?

- Does it increase click‑throughs?

- Does it drive more sales?

Split testing offers several tangible benefits:

Higher Engagement and Conversions

Testing shows what content, wording, or design your audience likes best. When you find what works, open rates, clicks, and sales go up. Even small changes, like tweaking a subject line or button color, can make a big difference.

Make Decisions Based on Data, Not Guesswork

Instead of relying on your gut feeling, you can see what actually works. Using real data from your subscribers helps you make smarter, safer decisions.

Better Experience for Your Readers

Split testing shows which layout or design is easier to read and more enjoyable. When emails are simple and clear, your subscribers are happier and more likely to stick around.

Lower Risk and Cost

Rather than overhauling your entire email strategy at once, you test small changes first and improve results step by step. Getting more out of your existing list this way is cheaper than acquiring new subscribers, and it helps you avoid costly mistakes.

What Are the Key Elements to Test?

Effective split testing requires focusing on elements that significantly influence engagement. The following components are prime candidates for experimentation:

| Element | What to Test | Why It Matters |
| --- | --- | --- |
| Subject Line | Length (short vs. long), personalization (FirstName or other dynamic fields), tone, structure (questions vs. statements), inclusion of emojis or punctuation | The subject line acts as the gatekeeper. Testing it can result in higher open rates and improved conversion rates. |
| Preview Text/Preheader | Reinforcing vs. contrasting the subject line, plain copy vs. emojis, teasing content vs. restating value, and including a CTA | Preview text is a second chance to persuade someone to open an email; subtle changes here can meaningfully shift open rates. |
| Sender Name | Brand name vs. individual name, hybrid (e.g., “Laura at Company”), different senders for different campaign types | Trust is critical. Testing sender names helps find the combination that feels most authentic to your audience. |
| Content and Layout | Long vs. short copy, storytelling vs. bullet points, placement of images, tone (formal vs. casual) | These elements influence readability and engagement. Aligning the message structure with audience preferences improves click‑through rates. |
| Call‑to‑Action (CTA) | Button text (“Buy now” vs. “Learn more”), color, placement (top vs. bottom), size | CTAs directly impact click‑through rates. Because CTAs determine whether readers take the next step, 85% of businesses prioritize testing them. |
| Send Time and Day | Morning vs. evening sends; weekdays vs. weekends | The timing of an email influences whether recipients see and open it. Testing send times helps identify when your audience is most responsive. |

Test these elements one at a time. If you change several variables simultaneously, you can’t tell which change drove the improvement.
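You can enforce this discipline before sending. Describe each version as a set of fields, then confirm that the control and the variant differ in exactly one of them. The minimal Python sketch below does this. The field names and the helpers `changed_fields` and `is_valid_split_test` are hypothetical and are not part of any email platform's API.

```python
def changed_fields(control: dict, variant: dict) -> set:
    """Return the names of fields whose values differ between two email versions."""
    keys = control.keys() | variant.keys()  # include fields present in only one version
    return {k for k in keys if control.get(k) != variant.get(k)}


def is_valid_split_test(control: dict, variant: dict) -> bool:
    """A clean split test changes exactly one element."""
    return len(changed_fields(control, variant)) == 1


# Hypothetical email versions described as simple fields.
control = {"subject": "Your March picks", "sender": "Acme", "cta_text": "Shop now", "send_hour": 9}
variant_ok = {**control, "subject": "Laura, your March picks"}
variant_bad = {**control, "subject": "Laura, your March picks", "cta_text": "See more"}

print(is_valid_split_test(control, variant_ok))   # True
print(is_valid_split_test(control, variant_bad))  # False
```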

Remember, structured experiments reveal which component truly moves the needle.

How to Conduct an Email Split Test

Before you dive into testing, it helps to think of split testing as a mini experiment.

Each test provides insights into your audience’s preferences, helping you make smarter decisions rather than relying on guesswork.

Here’s a step-by-step guide to improve your emails, one change at a time, and boost engagement and conversions:

Identify the Problem and Set a Goal

Start by reviewing your email metrics.

- Are open rates low?

- Are clicks lagging?

Pick one clear issue to focus on. Then set a specific, measurable goal, such as “increase opens by 10%” or “raise click‑through rate from 2% to 2.5%.” Clear goals help you avoid random, unfocused tests.
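A goal like “increase opens by 10%” can be read two ways:

- A relative lift, where a 20% open rate becomes 22%.
- An absolute lift of 10 percentage points, where 20% becomes 30%.

The minimal Python sketch below makes the difference explicit so the goal you write down is unambiguous. The `target_rate` helper is hypothetical, and rates are expressed as fractions.

```python
def target_rate(baseline: float, lift: float, relative: bool = True) -> float:
    """Return the target rate for a goal such as 'increase opens by 10%'.

    baseline and lift are fractions (0.20 means 20%). A relative lift
    scales the baseline; an absolute lift adds percentage points.
    """
    if relative:
        return baseline * (1 + lift)
    return baseline + lift


# A 20% open rate with a "10%" goal, read both ways:
print(round(target_rate(0.20, 0.10, relative=True), 4))   # 0.22 (22% opens)
print(round(target_rate(0.20, 0.10, relative=False), 4))  # 0.3 (30% opens)
```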

Form a Hypothesis

Based on your analysis, make an educated guess about what might improve results. For example:

- “Adding the subscriber’s name to the subject line will increase opens.”

- “Moving the CTA button to the top will increase clicks.”

Decide what metric you’ll track to measure success.
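It is easy to lose track of the problem, hypothesis, tested element, and success metric across campaigns. Below is a minimal sketch of a test-plan record in Python. It assumes you keep plans yourself rather than inside your email platform, and `SplitTestPlan` and its fields are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SplitTestPlan:
    """One planned email split test. All field names are illustrative."""
    problem: str          # what the metrics review showed
    hypothesis: str       # the educated guess you want to check
    element: str          # the single element that differs between A and B
    metric: str           # the one metric that will decide the winner
    baseline_rate: float  # current value of that metric, as a fraction
    target_rate: float    # the goal from step one, as a fraction

    def __post_init__(self) -> None:
        # Reject percentages typed as whole numbers (e.g. 20 instead of 0.20).
        for rate in (self.baseline_rate, self.target_rate):
            if not 0.0 <= rate <= 1.0:
                raise ValueError("rates must be fractions between 0 and 1")


plan = SplitTestPlan(
    problem="Open rates are low",
    hypothesis="Adding the subscriber's name to the subject line will increase opens",
    element="subject line",
    metric="open rate",
    baseline_rate=0.20,
    target_rate=0.22,
)
```

Writing the metric down before sending keeps you from picking whichever number happens to look best afterwards.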

Create Variations and Set Up the Test

Build a control email (A) and a variation (B) that changes only one element, like a different subject line or button placement. Randomly split your list into equal groups to ensure a fair test.
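Most platforms do the random split for you, but the idea is simple to sketch. The Python below uses a hypothetical `split_list` helper. It shuffles a copy of the list with a seeded random generator (seeded so the split can be reproduced) and deals it into groups of equal size.

```python
import random


def split_list(subscribers: list[str], n_variants: int = 2, seed: int = 0) -> list[list[str]]:
    """Randomly assign subscribers to n_variants groups of (near-)equal size.

    Shuffling first means assignment does not depend on list order
    (for example, signup date), which could otherwise bias the groups.
    """
    shuffled = subscribers[:]            # leave the caller's list untouched
    random.Random(seed).shuffle(shuffled)
    # Deal the shuffled list round-robin: group sizes differ by at most one.
    return [shuffled[i::n_variants] for i in range(n_variants)]


emails = [f"user{i}@example.com" for i in range(10)]
group_a, group_b = split_list(emails, seed=42)
print(len(group_a), len(group_b))  # 5 5
```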

Most email platforms, including Mailchimp, ActiveCampaign, Klaviyo, HubSpot, Encharge, and Mailjet, offer easy, drag-and-drop A/B testing tools.

Run the Test Long Enough

Give your test enough time to gather meaningful results. Avoid ending it too early. Look at past campaigns to estimate when most subscribers engage.
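To estimate how long to wait, check how quickly opens accumulated in a past campaign. The Python sketch below rests on a few illustrative choices:

- It assumes you can export, for each open, the delay in hours between send and open. Not every platform exposes this.
- `hours_to_capture` is a hypothetical helper, and the 90% cutoff is an illustrative default.
- The delays in the example are made up.

```python
import math


def hours_to_capture(open_delays_hours: list[float], share: float = 0.9) -> float:
    """Hours after send by which `share` of a past campaign's opens had happened.

    open_delays_hours: for each open, hours between send and open.
    Uses the nearest-rank percentile, so the result is an observed delay.
    """
    if not open_delays_hours:
        raise ValueError("need at least one open")
    delays = sorted(open_delays_hours)
    rank = math.ceil(share * len(delays))  # nearest-rank method, 1-based
    return delays[rank - 1]


past_opens = [0.2, 0.5, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 8.0, 20.0]  # made-up delays
print(hours_to_capture(past_opens))  # 8.0
```

Here, waiting about eight hours would have captured 90% of that campaign's opens.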

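A sample of at least 10,000 recipients is often recommended for reliable results. The size you actually need, however, depends on your baseline rate and the smallest improvement you want to detect. Avoid testing multiple changes at once, or you won’t know what caused the difference.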
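The sample you need can be estimated before you send. The minimal Python sketch below uses the standard normal-approximation formula for comparing two proportions. Its defaults are a two-sided test, 5% significance, and 80% power; these are conventional assumptions, not requirements. The `sample_size_per_variant` helper is hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(p1: float, p2: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Recipients needed in EACH group to detect a change from rate p1 to p2.

    Normal-approximation formula for a two-sided, two-proportion test.
    """
    if p1 == p2:
        raise ValueError("p1 and p2 must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p1 - p2) ** 2)


# Baseline open rate 20%, goal 22%:
print(sample_size_per_variant(0.20, 0.22))  # 6510
```

With these inputs, the sketch asks for roughly 6,500 recipients per version, or about 13,000 in total. Larger expected lifts need far fewer recipients.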

Analyze Results and Act

Compare performance using your chosen metric. If one version wins by a statistically significant margin, send it to the rest of your list. If results are inconclusive, revise your hypothesis and run another test.
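Whether one version truly wins is easier to judge with a significance test. Many platforms report significance for you. The minimal sketch below uses a standard two-proportion z-test, which is a common choice but not necessarily what any given platform uses, built on Python's standard library. The counts are hypothetical and mirror the 2.0% vs. 2.6% conversion example later in this article, with 5,000 recipients per version.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(successes_a: int, n_a: int, successes_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for the difference between two rates (e.g. click rates).

    Returns (z, p_value). A small p-value (commonly below 0.05) suggests the
    difference is unlikely to be random noise.
    """
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)  # rate under "no difference"
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test: both groups have identical all-or-nothing results")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


z, p = two_proportion_z_test(successes_a=100, n_a=5000, successes_b=130, n_b=5000)
print(f"z = {z:.2f}, p = {p:.3f}")  # z = 2.00, p = 0.045
```

Here the variant's 2.6% rate beats the control's 2.0% with p ≈ 0.045, just under the conventional 0.05 threshold.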

Over time, repeated tests build a better understanding of your audience and steadily improve engagement and conversions.

Best Practices for Successful Split Testing

Following established guidelines improves the reliability of your experiments:

- Test frequently but smartly: Focus on the emails you send most often, such as newsletters, onboarding messages, or promotions. Regular testing helps you learn faster and improve results over time.

- Change only one thing at a time: Keep everything else the same while you test a single element, such as a subject line or CTA button. This way, you’ll know exactly what caused any change in performance.

- Randomize and segment your list: Split your email list at random and ensure both groups are similar in size and makeup. Avoid testing only your most engaged users, as this can give skewed results.

- Be patient for results: Don’t pick a winner too early. Let enough time pass and collect enough responses to see a clear difference.

- Keep records: Write down your hypothesis, what you tested, and the results. Tracking your tests helps you learn from past experiments and plan future ones more effectively.

- Avoid common mistakes: Don’t test too many things at once, ignore sample size, or end tests too quickly. Remember, not every test will be a big win—but each one teaches you something valuable.

- Make testing part of your routine: Share results with your team and show how small changes can improve performance. Collaborate with designers, copywriters, and analysts to make testing a regular part of your email strategy.
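The “randomize and segment” advice can be sketched as stratified random assignment. Subscribers are shuffled within each segment and then dealt alternately into the two groups, so every segment is split evenly. Below is a minimal Python sketch. It assumes you already know each subscriber's segment, and the segment labels and `stratified_split` helper are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(subscribers: dict[str, str], seed: int = 0) -> tuple[list[str], list[str]]:
    """Split subscribers into two groups with the same mix of segments.

    subscribers maps email -> segment label (e.g. "engaged", "inactive").
    """
    rng = random.Random(seed)
    by_segment = defaultdict(list)
    for email, segment in subscribers.items():
        by_segment[segment].append(email)

    ordered = []
    for segment in sorted(by_segment):  # sorted so runs are reproducible
        members = by_segment[segment]
        rng.shuffle(members)            # random order within each segment
        ordered.extend(members)
    # Deal alternately: each segment, and the list as a whole,
    # is split as evenly as possible between the two groups.
    return ordered[0::2], ordered[1::2]


subs = {f"u{i}@example.com": ("engaged" if i < 30 else "inactive") for i in range(100)}
a, b = stratified_split(subs, seed=1)
print(len(a), len(b))  # 50 50
print(sum(subs[e] == "engaged" for e in a), sum(subs[e] == "engaged" for e in b))  # 15 15
```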

How Split Testing Increases Conversions

Implementing split testing may seem like a small procedural change, but it can dramatically increase your return on investment.

Here’s how that process directly lifts conversions:

You Learn What Gets Attention

Small changes, such as a punchier subject line, a friendlier “from” name, or clearer preview text, can get more people to open the email.

You Make Clicks Easier And Clearer

Testing button text, placement, or even using a button versus a text link shows what makes people click. A clearer, stronger CTA turns casual readers into clickers.

You Match Message To Audience

Split tests reveal tone, offers, and imagery your audience likes. Over time, you tune your emails to feel more relevant, and relevant messages convert better.

You Reduce Wasted Changes And Costs

Instead of rolling out big, risky changes to everyone, you validate improvements on a slice of your list first. That saves money and avoids hurting results.

Mini example (simple math):

- List size: 10,000 subscribers.

- Current conversion rate: 2.0% → 200 conversions.

- Variant improves conversion to 2.6% → 260 conversions.

That’s 60 extra conversions. If the average order value is $50, that’s an extra $3,000 in revenue (60 × $50 = $3,000). Small percentage gains add up fast.
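The same arithmetic can be wrapped in a small function and reused for any list size, rate, or order value. The `extra_revenue` helper below is a hypothetical Python sketch. It assumes the improved rate applies to the whole list, as in the example.

```python
def extra_revenue(list_size: int, base_rate: float, new_rate: float,
                  avg_order_value: float) -> tuple[int, float]:
    """Extra conversions and revenue when a variant lifts the conversion rate."""
    # Round to a whole number of conversions (floating-point rates are inexact).
    extra_conversions = round(list_size * (new_rate - base_rate))
    return extra_conversions, extra_conversions * avg_order_value


conversions, revenue = extra_revenue(10_000, 0.020, 0.026, 50.0)
print(f"{conversions} extra conversions, ${revenue:,.0f} extra revenue")
# 60 extra conversions, $3,000 extra revenue
```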

You Build Repeatable Wins

Every test teaches you something. Keep a record, and patterns emerge: copy that works, layouts that convert, and send times that perform. Those repeatable lessons let you scale improvements across campaigns.

Tools and Technologies for Email Split Testing

Most modern email service providers include built‑in A/B testing capabilities. Here are some popular options:

Mailchimp

Offers easy A/B testing of subject lines, content blocks, and send times. Users can test up to three variations of a single variable and choose the winning version based on open or click‑through rates.

ActiveCampaign

Provides split testing for campaigns, automated workflows, and landing pages. You can test emails, SMS, and automation sequences, with detailed reports on engagement metrics.

Mailjet

Includes A/B testing across subject lines, content, images, and CTAs, along with deliverability insights and statistical significance calculations.

Klaviyo

Popular among e‑commerce brands for its robust testing features, including multivariate tests and segmentation by customer behavior.

HubSpot

Enables A/B testing within marketing emails, workflows, and landing pages, and integrates results with its CRM for deeper analysis.

Encharge

Focuses on marketing automation for SaaS companies. Its 2026 guide stresses that split testing is not a one‑off but a continuous process; Encharge automates variant creation and reporting to streamline experimentation.

These platforms typically let you choose the size of your test group (often 10–20% of your list), specify the variable to test, and define the winning metric.

Once the system determines a winner, it automatically sends the remaining subscribers the better‑performing version. Additionally, AI‑powered testing is gaining traction.
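The test-group-then-winner flow these platforms automate can be sketched in Python. This is not any platform's actual API, and `plan_test_send` and `pick_winner` are hypothetical helpers. The sketch makes these assumptions:

- The test group is split evenly between versions A and B.
- The winner is simply the version with the higher metric, with ties going to the control. This simple rule does not check statistical significance.

```python
import random


def plan_test_send(subscribers: list[str], test_fraction: float = 0.2, seed: int = 0):
    """Return (group_a, group_b, remainder) for a test-then-send-winner campaign.

    A random `test_fraction` of the list is divided evenly between A and B;
    everyone else is held back to receive the winning version later.
    """
    shuffled = subscribers[:]
    random.Random(seed).shuffle(shuffled)
    test_size = round(len(shuffled) * test_fraction)
    test_group, remainder = shuffled[:test_size], shuffled[test_size:]
    return test_group[0::2], test_group[1::2], remainder


def pick_winner(rate_a: float, rate_b: float) -> str:
    """Choose the version with the higher winning metric (ties go to A, the control)."""
    return "B" if rate_b > rate_a else "A"


subs = [f"user{i}@example.com" for i in range(10_000)]
group_a, group_b, remainder = plan_test_send(subs, test_fraction=0.2)
print(len(group_a), len(group_b), len(remainder))  # 1000 1000 8000
print(pick_winner(rate_a=0.18, rate_b=0.21), "goes to", len(remainder), "subscribers")
# B goes to 8000 subscribers
```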

Final Thoughts

Now that you know what split testing in email marketing is, use it to make smart, data-driven decisions that increase engagement, clicks, and conversions.

By testing subject lines, preview text, sender names, content, and CTAs, one change at a time, you can steadily improve every part of your emails.

Even small improvements add up. Each test teaches you more about what your subscribers like, helping you send emails that feel personal, relevant, and easy to act on.

While not every experiment will be a big win, consistent testing creates a feedback loop that sharpens your strategy and helps your brand stand out in a crowded inbox.

Modern email platforms and AI tools make it easier than ever to set up tests, analyze results, and act on insights quickly.

The result? Higher open rates, more clicks, better conversions, and ultimately, more revenue, all without guessing.

Start testing today, track your results, and use what you learn to refine your emails continuously. Over time, even small changes can turn into big growth for your business.